The Future of 3D Art Is Here: NVIDIA AI Lab Cases

At Room 8 Studio, we strive to build a community of curious and ambitious 3D art professionals. With that in mind, we are always eager to share expertise and information that can help you further explore your passion and push the boundaries of excellence. This time, we’ve prepared insights from the 3D Art Meetup: The Future of AI to raise the curtain on the AI tech that will soon be helping 3D artists.

The event brought together experts in 3D art and AI research to discuss how AI technologies can solve problems for 3D artists in GameDev. Masha Shugrina, a Senior Research Scientist at NVIDIA Toronto AI Lab, presented how far her team has come in developing creativity-enhancing applications, while Maksym Makovsky, our Technical Art Director, gave his expert opinion on what the industry needs and how the presented projects can be used. Their conversation gave a glimpse of how AI can simplify our workflow. Now we are excited to share the details of the research projects that might soon become your go-to tools.

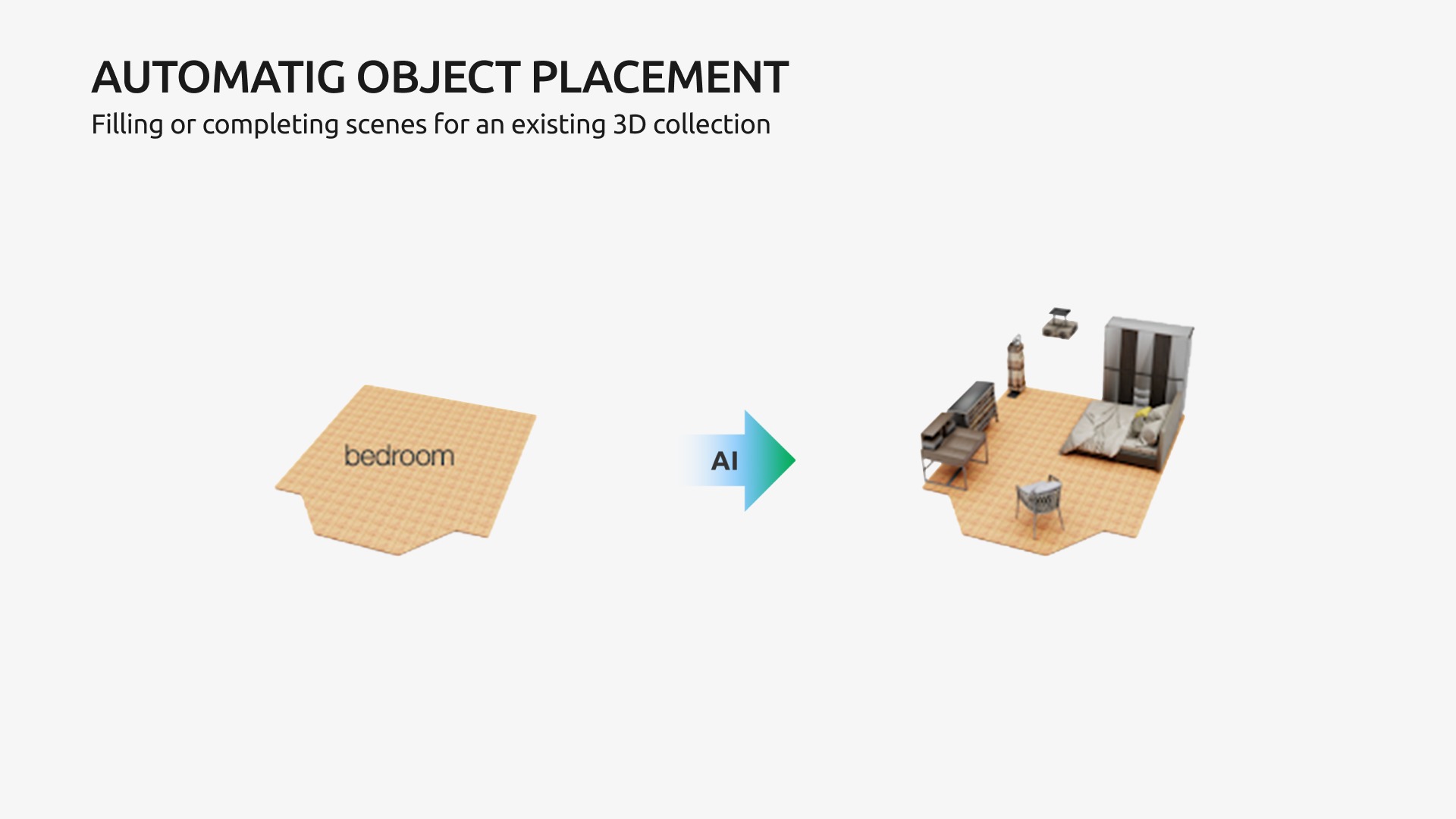

AI for Furniture Arrangement

This project has the vision of automatic or assistive world-building at its core. As 3D artists create and populate massive digital worlds, AI researchers looked into simplifying the process of arranging ready-made 3D objects. They picked the problem of furnishing a room as a starting point. Given a floor plan and a room type, the AI can generate multiple layouts with suitable furniture. It determines which kinds of objects to use, as well as their orientation, scale, and position within the layout. The same approach can be used to build similar programs for adding clutter to spaces or arranging items on the streets of 3D worlds.

Such tools can be great time-savers for level artists who need to populate enormous 3D worlds with assets. Yet, according to Maksym, the tool has to be integrated into the game engine to make this technology usable for GameDev.
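To make the idea more concrete, here is a minimal sketch of how one generated layout could be represented as data. It is only an illustration: the class names, fields, units, and the `inside_room` check are all assumptions made for this example, not NVIDIA's actual format or method.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class PlacedObject:
    """One piece of furniture in a layout (hypothetical structure)."""
    kind: str            # object category, e.g. "bed" or "desk"
    x: float             # position on the floor plan, in metres
    y: float
    rotation_deg: float  # orientation around the vertical axis
    scale: float = 1.0   # uniform scale factor


@dataclass
class Layout:
    """A rectangular room plus the objects placed in it (hypothetical)."""
    room_type: str
    width: float         # room size in metres
    depth: float
    objects: List[PlacedObject] = field(default_factory=list)


def inside_room(layout: Layout, obj: PlacedObject) -> bool:
    """Check that the object's anchor point lies within the floor plan."""
    return 0.0 <= obj.x <= layout.width and 0.0 <= obj.y <= layout.depth


# Example: a bedroom with one valid and one misplaced object.
bedroom = Layout(room_type="bedroom", width=4.0, depth=3.0)
bedroom.objects.append(PlacedObject("bed", x=1.0, y=1.5, rotation_deg=90.0))
bedroom.objects.append(PlacedObject("lamp", x=5.0, y=1.0, rotation_deg=0.0))

valid = [o.kind for o in bedroom.objects if inside_room(bedroom, o)]
```

A structure like this is the kind of output a game engine integration would need to consume in order to spawn assets at the suggested positions.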

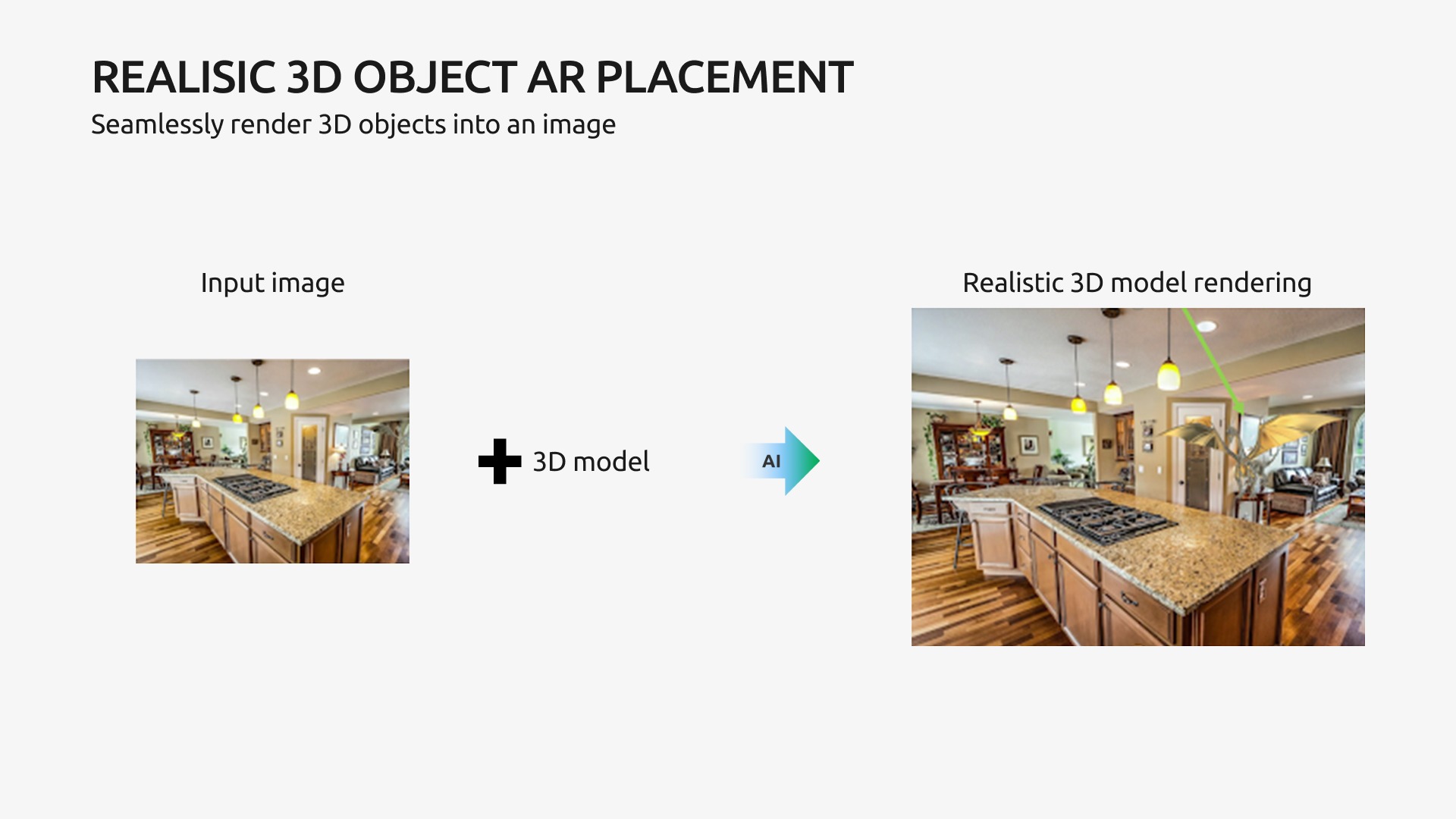

AI Learning Lighting Estimation

Continuing with indoor object placement, another NVIDIA research project deals with inverse rendering. Here, AI infers 3D geometry, reflectance, and lighting from 2D pictures of indoor scenes. The best part is that it works for both rendered 3D scenes and plain 2D photos. This technology allows us to place 3D models within 2D images, with the AI estimating the 3D lighting.

As for its practical application, such AR placement is highly convenient for concept artists. By pasting an asset directly into the reference, they can quickly see how game objects look in a particular environment. This saves the time they would otherwise spend recreating an entire 3D scene.

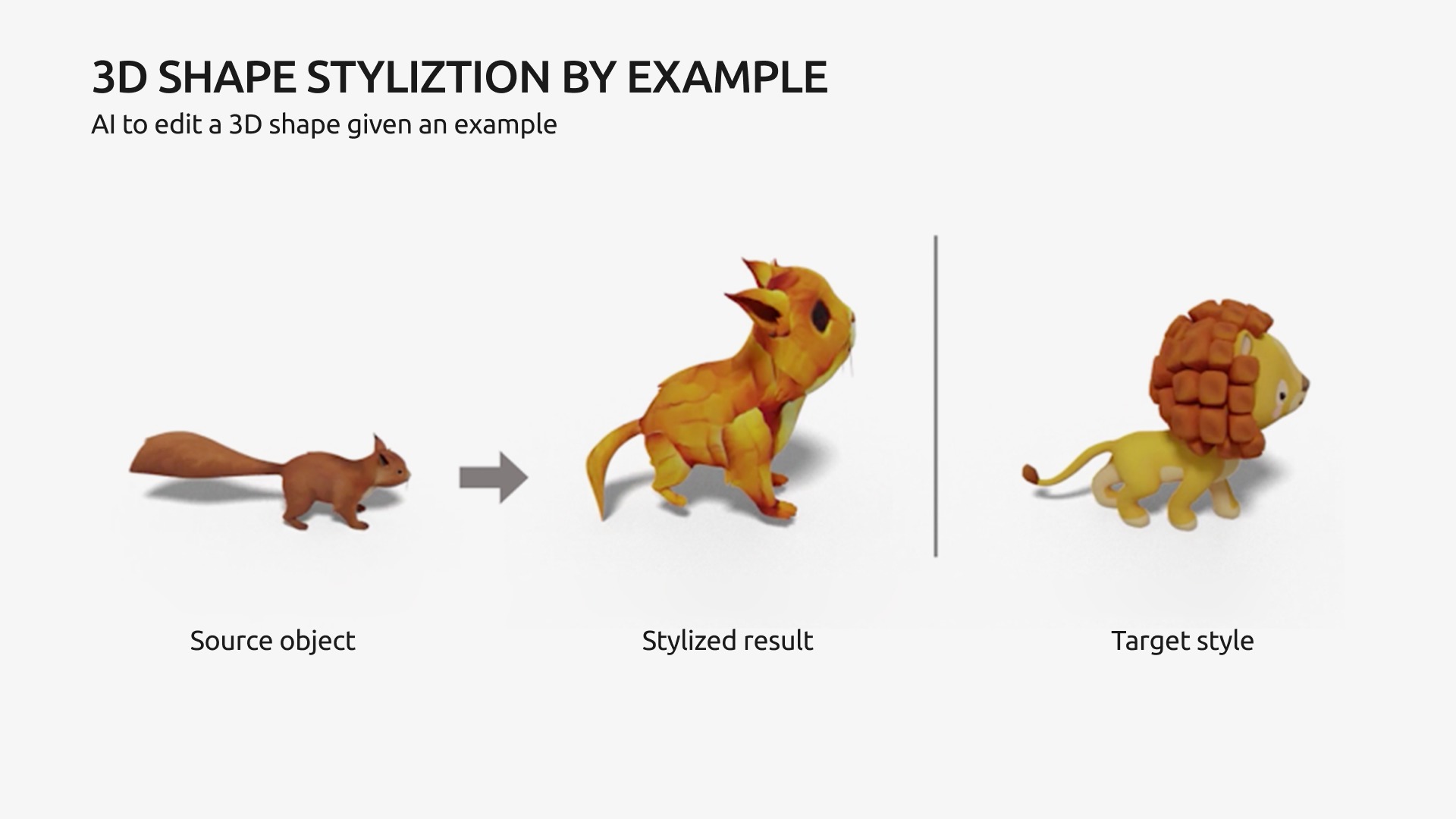

3D Style Transfer

Have you ever tried an app or an online tool that makes your selfie look like an impressionist painting, a pencil sketch, or pixel art? Well, it seems you’ll soon be able to change the style of your 3D models just as quickly. Masha presented a project in which the team trained AI to transfer the style properties of one 3D model onto others. The program considers the geometry and texture of the “target shape.” More than that, NVIDIA researchers want the tool to have a “style strength” feature to control the extent of stylization.

This tool will come in handy for ensuring the unity and consistency of stylized games, because whenever we move away from hyperrealism, there’s a higher chance of creative differences coming up. Artists might interpret the style differently or struggle to pick up on the reference style. This problem often comes up when working on client projects. A model created with a style transfer tool can therefore become a great starting point that an artist can build on and improve.

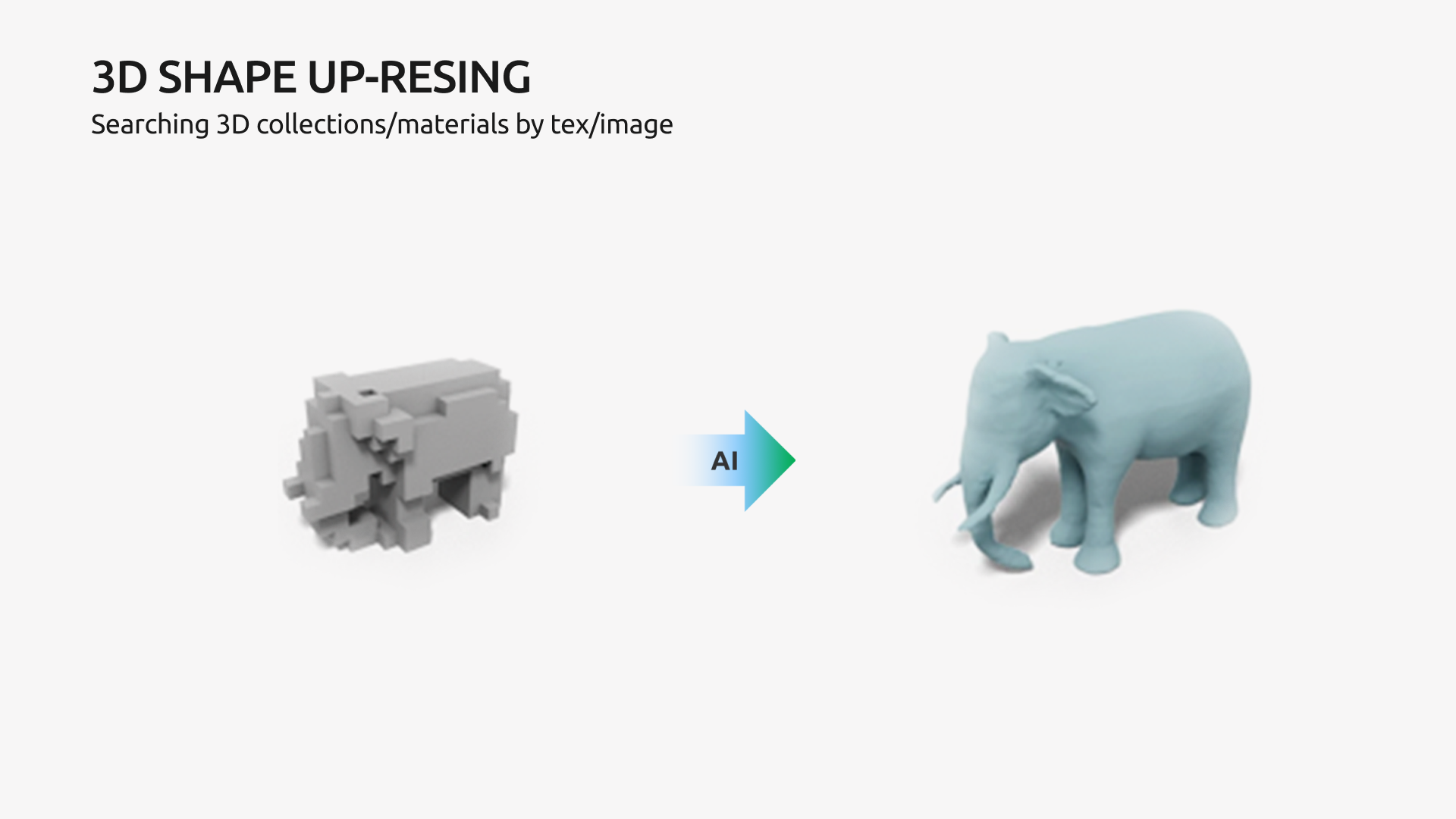

Rough 3D Shape Detailization

AI can also let us go from rough voxel shapes to high-fidelity models much faster with a 3D shape detailization tool. The AI agent was trained to add detailed geometry to a rough 3D shape by matching the input with a reference shape from a training data set. It also performs well on data that it hasn’t seen in training, which means that it can add detail to artist-created voxels.

Masha and Maksym can see this research project developing into two practical implementations. The first one would be a fun feature for regular gamers. They would create a rough pixel shape; then AI would transform it into a beautifully detailed model, and voilà – here comes a custom-made character they can use in the game. The other practical implementation would focus on helping 3D artists to create variations of one 3D model faster. Instead of making changes to a high-fidelity model, which is rather time-consuming, an artist would edit the basic pixel draft and get a new shape variation.

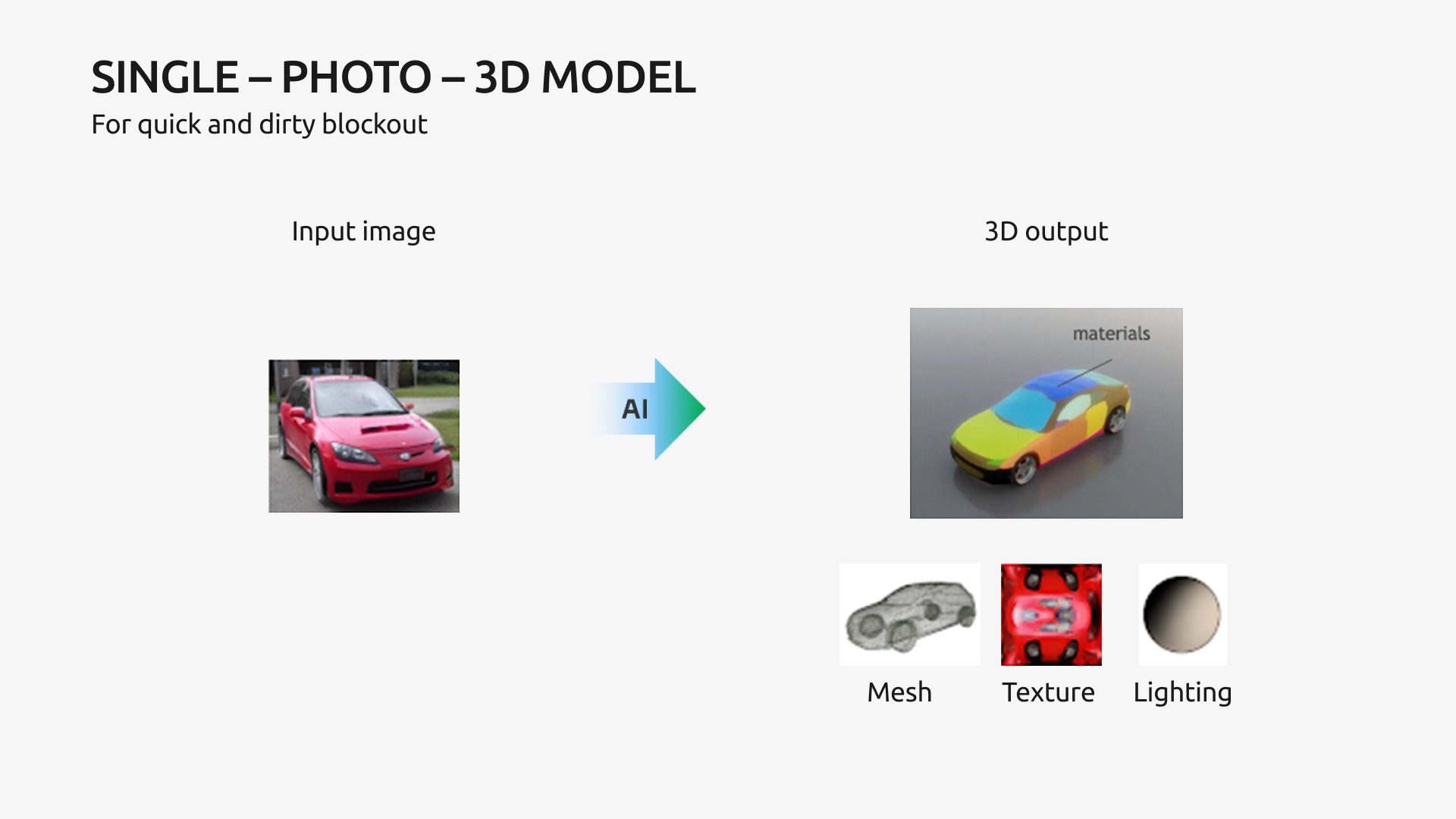

3D Model Generation

Finally, AI can be trained to generate 3D models based on 2D references. NVIDIA’s test project focused on car models. The AI learned to predict 3D properties like mesh, texture, and lighting from as little as a single image of a vehicle. Even more impressive is that it also segments the model into parts for further animation. This clever application is called Image2Car and is available on NVIDIA Omniverse’s AI Toy Box for anyone to try out.

The same technology can be used to train AI to create objects other than cars or to generate 3D models based on 2D sketches. That would be an immense help to 3D artists, as they would spend less time on the technical transfer from 2D to 3D and more time on perfecting artistic details. This could lead to a higher-quality final product on a smaller budget.

How the Future of 3D Art Looks

AI tools will save us time and resources. Yet the most inspiring takeaway from the meetup is that all these technologies are being developed to help us realize our creative vision and potential. According to Masha, as 3D artists and GameDev professionals, we have the power to guide the research and determine which tools will be delivered to us first. All it takes is to join the conversation and voice your craziest ideas.

Have a suggestion for a useful 3D art AI tool? Let us know in the comments!